03 - Format Sensitivity in LLM Speaker Simulation

Over the past two months I worked on a research paper comparing how formatting changes the results when LLMs are tasked to simulate speakers in group chats. This blog post is an overview of the paper. You can read the full paper here.

Motivation

I'm in a lot of group chats with LLMs on Discord. I've even built multiple harnesses of my own, such as Claudebot (no relation to "Clawdbot", I used the name first!). As an engineer building a Discord bot, there are a lot of decisions to make about how the chat will be presented to the LLMs over the API, and there's a lot of hearsay and anecdotes about how best to do this. My main motivation in this study was to do a rigorous comparison of different formats and catch at least a glimpse of why some are supposedly better than others.

Typically when I'm in a group chat with an LLM, the bot is "playing itself". For example, there will be a bot named "Claude Haiku 4.5" whose responses are generated by the Haiku 4.5 Anthropic API endpoint. I noticed that this situation often has a warmup effect, where the bot arrives confused about who they are and slowly figures out what it means to be "Claude Haiku 4.5" in the context of the chat.

To get around the multi-turn role confusion, I decided to instead study something else that preserves the format effects: simulation. The overall setup involves giving the LLM a group chat context where they're tasked to speak as a particular speaker in the chat. Then they already have some sense for their identity and role in the chat, and I can test the formats against each other directly. This makes sense theoretically if we take the Simulators frame, because when the LLM is playing "Claude Haiku 4.5", it's just as much a simulation as when they're playing "snav0", even if in the former case there's a correspondence with their product name and model number.

I didn't find much research studying similar ideas. Most research on group chats was oriented toward throwing a helpful assistant bot into the chat and having it play a structured role different from the humans in the chat, and most research on simulation involved elaborate character and roleplay scaffolding to optimize the "quality" of the simulation. This study, in contrast, is "naturalistic": avoid giving the bots too many cues, give them a character to play, and see how it goes, comparing different transcript formats as the experimental condition.

With that in mind, we can move into the methodology.

Investigation

Dataset

The main study involved running LLM completions against group chat windows. I ran a Discord server for about a week called "Bench Warmers" to gather data ethically. Users had to check a box consenting to their data being used for evaluation and research upon joining. I also used a Kaggle Dataset with a large amount of Discord transcripts, which I converted into the same form to ensure I wasn't seeing dataset bias based on the specifics of my server.

We sliced the transcripts into windows, which served as the base context for the LLM to then simulate a response, picking one of the users in the window as the simulation target. We made sure all windows had at least 3 speakers, to ensure we were testing proper group chat formats and not variations on one-on-one chats more common in the training data.

Experiment Setup

When building a Discord bot, there are two main ways of providing the transcript to the bot. The first we call Block Format, and it looks like placing the transcript in the user block, typically followed by a short assistant prefill to define which user the bot will be speaking for in its rollout:

[

{"role": "user", "content": "<transcript>"},

{"role": "assistant", "content": "<target_name>:"}

]

The alternative approach we call Refill Format, where you instead place the transcript in the assistant block itself, so it looks to the LLM as though it's just continuing a conversation that they already generated earlier in the turn. This is technically a "partial turn prefill" in that you start the LLM's turn for them, and then let them complete the rest.

[

{"role": "user", "content": ""},

{"role": "assistant", "content": "<transcript>\n<target_name>:"}

]

The empty user turn is a problem. In particular, Anthropic doesn't like it. We ended up using "Continue the conversation." for the main study, but we also compared against "[conversation begins]", which is used in another Discord bot, and we didn't see massively different results.

There's a third format, which is in a way the most "naive", that we call Alternating Format, where the bot places the target speaker's messages into assistant blocks and the surrounding dialogue into user blocks. This is how most 1-on-1 chat UIs as well as more structured or role-specific bots interact.

[

{"role": "user", "content": "alice: hey\nbob: what's up"},

{"role": "assistant", "content": "target: not much"},

{"role": "user", "content": "alice: cool cool"},

{"role": "assistant", "content": "target:"}

]

We tested three models: Claude Haiku 4.5, Kimi K2, and Gemini 3 Flash. All three models had participated in Bench Warmers at some point (except Gemini, where we actually had Gemini 3 Pro), and all were tested to support partial turn prefill at the API level (this is important because some API providers for Kimi K2 don't support prefill; we used Groq and confirmed support). We picked them because they were cheap and I was paying out of pocket for the study. OpenAI models were excluded because they don't support partial turn prefill.

We then made the API calls and gathered the data. The main study ended up being 4,179 API calls per condition (8,358 calls total), because there were a bunch of variations for things like window size (40 vs 80 vs 120 messages). The Kaggle dataset also yielded more windows overall even though we extracted the same number of speaker turns, because it had much more speaker diversity (743 unique Kaggle speakers vs 69 unique speakers in Bench Warmers), so more windows hit the 3-speaker minimum.

Processing and Analysis

The main thing you notice when building a Discord bot is that the models have a tendency to do what we called Extended Simulation, where they generate more speaker turns beyond the first. So, for example, if the model was provided

alice: hey

bob: what's up

and asked to complete a turn for alice, they might keep going and generate something like:

alice: not much

charlie: hey guys

alice: hey charlie

[etc]

To deal with this, you can either use a stop-token-based solution, or just a regex that cuts off the output whenever a known speaker name emerges. We did the latter in this study, and the output post-regex is called the Guarded output and is used for most of the evaluations.

Beyond extended simulation, the main metrics we classified were:

- Parroting: did the model generate a speaker turn that exactly matches a previous turn?

- Refusal: did the model generate a refusal? We checked using a regex of refusal phrases based on what we saw in pilot studies.

- Metacommentary: did the model comment on the task rather than simulating a user?

I manually rated metacommentary scores, but we also compared with an Embedding Analysis: we used BAAI/bge-base-en-v1.5 with sentence-transformers to compute cosine similarity between the output embedding and three reference centroids. In other words, how close was the model output to (a) the speaker centroid, i.e. the embedding computed by the speaker's prior messages; (b) the chat centroid, i.e. the embedding computed by all other messages in the window; and (c) the meta centroid, i.e. the embedding of a bunch of curated metacommentary phrases. We could use these as a quick check for "metacommentary bias" without having to use LLM judges, which we tried and found somewhat unreliable.

Ablations

We varied a lot of possible effects, on a small scale. Here's the table:

| Ablation | Variants tested | n per cell |

|---|---|---|

| Window size | 40, 80, 120 messages | ~1,400 |

| Speaker selection | Deterministic vs. random | ~1,400 |

| Name format | name:, <name>, [name], name -- |

75–120 |

| System prompt | None, CLI simulation, persona | ~100 |

| Message format | Standard vs. alternating | 144–300 |

| Prefill position | Assistant prefill vs. user-tail | 100 |

The main idea here was to explore, at least with small sample sizes, how each of these various "knobs" affects the outputs, if they have an effect at all.

Results

Parroting

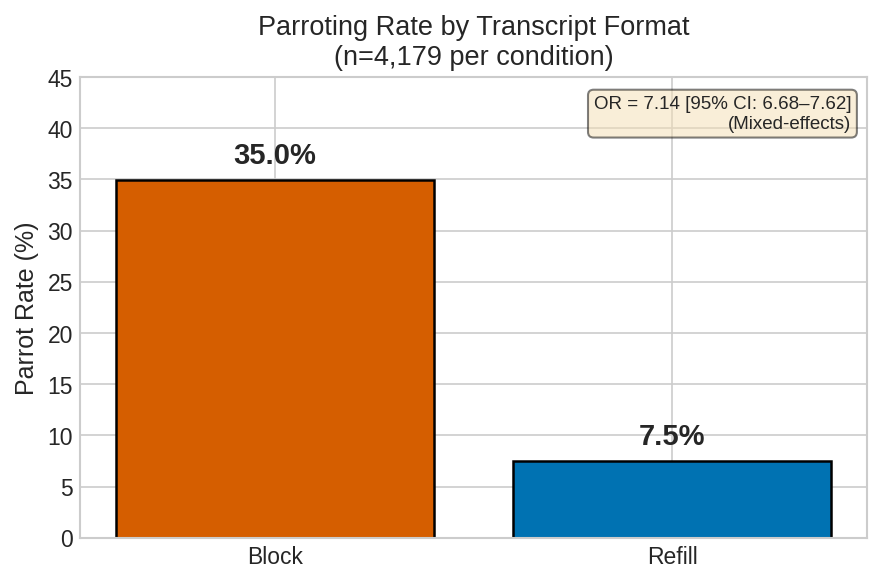

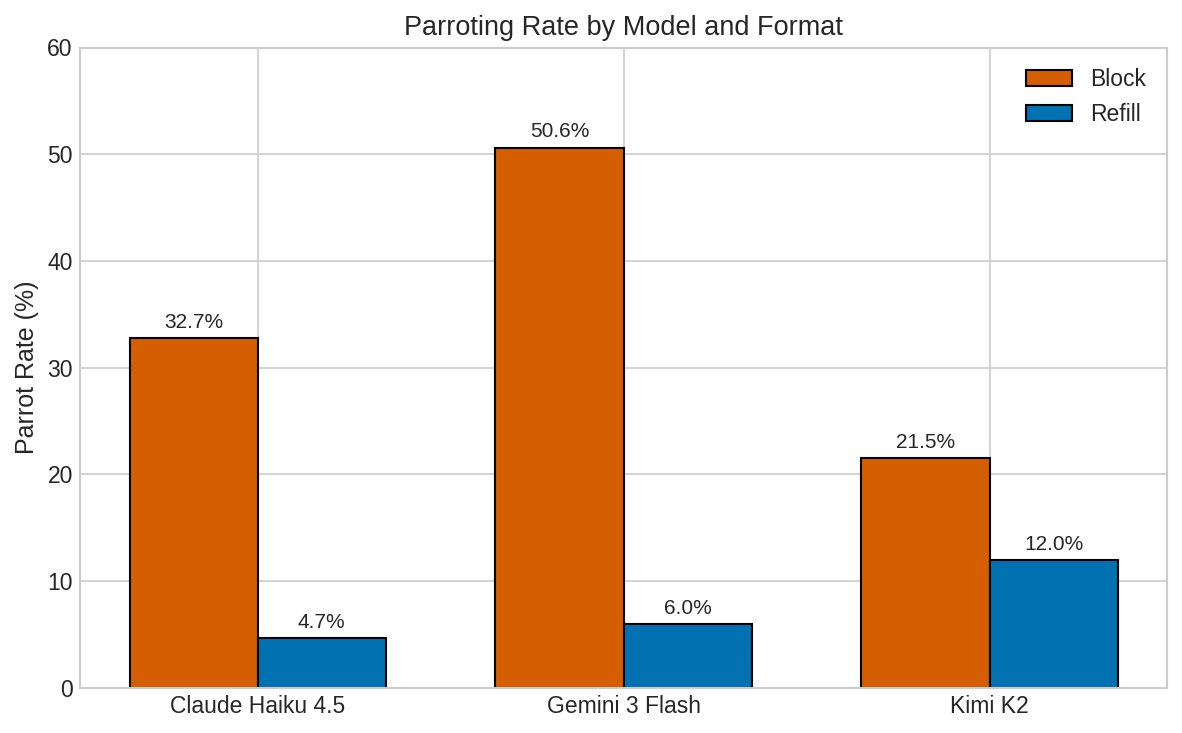

Once we did the data analysis, the big finding was that Block Format has a parroting problem. We saw parrot rates of 35% with block format in the base condition, vs 7.5% in the refill condition. That means that 35% of the time, the model didn't simulate a user, but instead repeated a message that was previously said in the chat. Gemini was the worst offender, with a parrot rate of 50%.

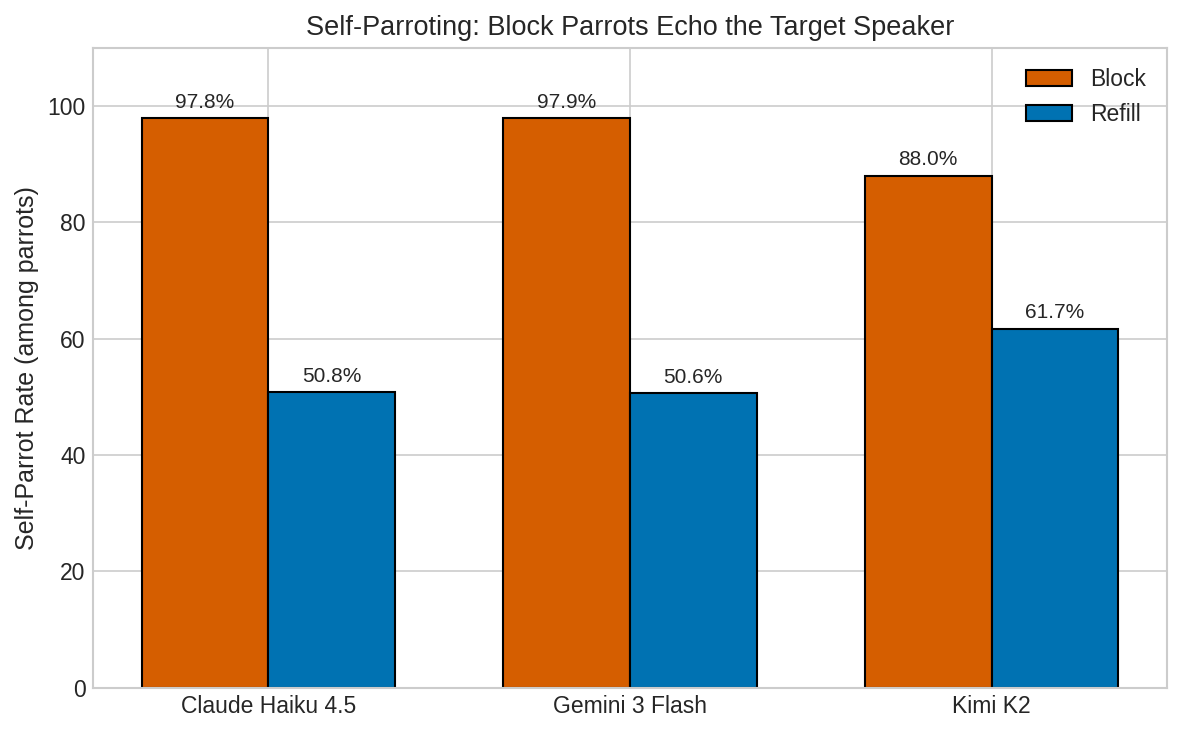

In particular, we looked at what messages they were parroting, and found that the vast majority of block format parrots were self-parrots, i.e. they repeated the simulated user's own message in the chat. When refill parrots occurred, they were only self-parrots about half the time.

In one case, all three models generated the exact same parrot in block format, but each generated new text in refill, which was wild:

Transcript (abridged):

thedarklord5005: CAN I USE GIFS NOW I GOT LEVEL 10!

Fleck: killer bean is returning

kxtrx: Why do I feel like one day sound is gonna do I video

where he deletes the server

Dogey33444: does anyone need [...]?

Target speaker: kxtrx

| Model | Block output | Refill output |

|---|---|---|

| Claude Haiku 4.5 | Why do I feel like one day sound is gonna do I video where he deletes the server (exact parrot) | The bad ending |

| Gemini 3 Flash | (same exact parrot) | No |

| Kimi K2 | (same exact parrot) | rip... |

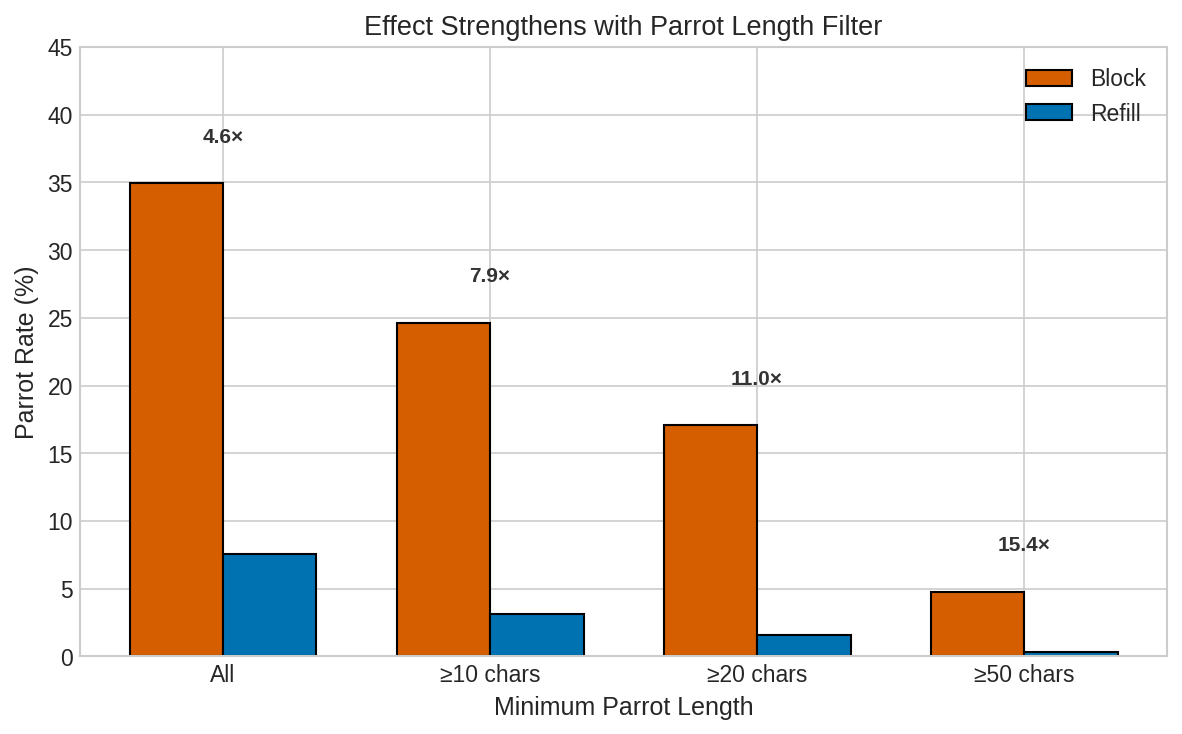

We looked at excluding short messages, because sometimes maybe it's appropriate for a user to repeat "lol" in the chat. Looking at longer parrots, we found the effect increased: looking at rollouts of >= 50 characters, we found that ~5% of block rollouts were parrots, vs 0.3% of refill messages!

The basic inference here is that the naive block format is sometimes triggering the model into a retrieval rather than a simulation task, but we can't say this as a strong claim without doing a proper interpretability or steering study to get causal evidence.

Extended Simulation

Refill format showed a much higher rate of extended simulation: 90%, vs 56% in block format. We can infer from this that the refill format primes the model into continuing the transcript, rather than just writing a single message. They tended to be full, multi-speaker transcripts, rather than degenerate or repetitive, and ~38% of refill outputs introduced "ghost speakers" who weren't present in the provided transcript. In a production context, these "ghost speakers" would slip through the stop token or regex guard if they immediately follow the first turn, and would be seen as the model's output in the chat. This phenomenon of simulating new speakers is called "fragmenting" or "fragging", because the output that's supposed to be a single voice "fragments" into multiple speakers. Here's a funny example of fragmentation occurring, with the model inventing a new character named "Goku black" to speak after the target speaker:

Transcript (abridged):

Mesics: @gream not the dream Do you need an admin/mod?

Jules Fortnite: eee

CorruptLaw: ...

Target speaker: Jules Fortnite

Raw Refill output (441 turns, hit MAX_TOKENS):

Jules Fortnite: can u shut the f up

Goku black: yo ← ghost speaker (not in input)

loganberry: bruh

CorruptLaw: what do you mean

Goku black: wassup

...

Guarded output:

Jules Fortnite: can u shut the f up

Goku black: yo

If you were building a Discord bot, it would look like the model invented a new character as part of their message!

Metacommentary Analysis

I tagged 100 samples and we found that Claude Haiku 4.5 has a big metacommentary problem. It provided metacommentary on a full 53% of block format transcripts, vs 7% in refill format. The other two models were sub-10% regardless of condition.

Sometimes Haiku would simulate the turn then immediately produce metacommentary, which would slip through the guard filter. For example, if we provided:

andreas;: bruh i really hate philosophy

Pytrox: Should i give free Duscord nitro link

@Palm2570Playz yess give it to mee

and asked Haiku to simulate andreas;, in refill format we get:

andreas;: Why must the world be like this

but in block format, we get:

andreas;: bruh i really hate philosophy

This appears to be a Discord chat log showing a casual server

conversation with many users posting various messages, jokes,

and banter. The conversation is fairly chaotic and typical of

an active Discord server, with users: Making jokes and random

comments — Discussing server roles and settings — Joking about

"Duscord"...

Is there something specific you'd like to know about this

chat log, or were you just sharing it?

In practice, this is likely one of the colloquial failure modes seen with running Claude models in particular in group chats.

When we looked at the embedding-based outputs too, we saw block was directionally closer to the metacommentary centroid, especially with Claude Haiku, but the overall effect size for the main study was fairly small.

Ablations

Window Size

Parroting rates were stable across window sizes.

Name Format

We looked at formats like <name>, [name], and name --. In all of them, the model would often revert to name: format during extended simulation. We didn't see differences in parroting rate at the sample sizes we tried.

System Prompts

We tried adding a system prompt as a system role message alongside the prompt formats. The system prompt was pulled from one of the production bots, and reads "Respond to the chat, where your username is shown as {bot_name}. Only respond with the content of your message, without including your username."

The system prompt had little effect in refill mode, aside from a decrease from 18% to 4% parroting in Kimi K2. But we saw that the system prompt massively decreased block parroting in Gemini and Kimi models, and had the opposite effect and increased parroting in Haiku. However, this might be because we prefilled the name, so the system prompt immediately conflicts with the model's prefill (where we already included the username). So we tried other system prompts with Haiku specifically, focusing on cases where the original prompt failed. "Continue the conversation as {name}." got parroting down to 5% on these hard cases, and "You are {name}. Continue participating naturally." got it down to 0%.

The main takeaway is that block format is sensitive to system prompt, and that the differences are model-dependent. Make sure to test system prompts before using them.

User-Tail Format

We also tested system prompts using a "user-tail" format, again sourced from production discord bot systems, where rather than using an assistant prefill of name: in block format, we append the name: suffix to the end of the user block. The full request would look like:

[

{

"role": "system",

"content": "Respond to the chat, where your username is shown as <target_name>.

Only respond with the content of your message, without including your username."

},

{

"role": "user",

"content": "<transcript>\n<target_name>:"

}

]

The user-tail format also substantially reduced parroting across all models, but we still saw significant metacommentary with Haiku. We also tried omitting the system prompt and almost none of the models simulated at all, instead being mostly summary and analysis.

Alternating Format

When we used alternating user/assistant blocks for the simulated speaker's messages, we also saw far less parroting. However, we also saw a massively decreased rate of extended simulation, median 0 extra turns across all models, vs median 10 to 189 turns in refill format. This effect is model dependent, though, as Gemini still showed a 31% rate of extended simulation in alternating format.

Discussion

Here's the full table of results (note that the alternating and user-tail dimensions were computed from smaller runs than the block/refill results: n=300/condition for the former, n=4,179/condition for the latter):

| Dimension | Block | Refill | Alternating | User-tail |

|---|---|---|---|---|

| Parrot rate | 35.0% | 7.5% | 6.7% | 7.7% |

| Self-parrot (of parrots) | 88–98% | 51–62% | n/a | 0–95% |

| Extended sim | 56.4% | 90.0% | 16% | 3.0% |

| Metacommentary (vd < −0.03) | 5.5% | 2.1% | 2.3% | 6.0% |

| Ghost speakers | 8.7% | 37.8% | n/c | n/c |

| Refusal | <1% | <1% | <1% | <1% |

So, what did we actually learn? Mainly that (a) transcript placement matters a lot, but in (b) unintuitive ways that vary by model. The main overall cue that we can hypothesize from the Simulators paper is that either the model clicks into a mode of being present within and simulating the next turns in the conversation, or the model clicks into a mode of treating the conversation as an object of analysis. The alternating and user-tail formats are somewhere in between: the model knows that the transcript is an object, but it's trying to simulate anyway, however it might do so less freely, as evidenced by the massive reduction in extended simulation rates. In general, it's important to play with the levers available when adding a model to a chat and seeing what its particular failure modes are and how to mitigate them.

There were some major limitations in the study:

- We don't know how good a proxy speaker simulation is for self-simulation.

- We didn't do any tests for "quality" of simulation beyond the main quantitative metrics above.

- We only tested 3 models and with relatively small ablation sample sizes.

- We didn't test with different temperatures.

- I was the only metacommentary rater (but let's be honest, these cases were pretty obvious).

- We still only had two datasets, both from Discord. Results might not generalize to other multi-party shapes.

- Many APIs don't support partial turn prefill, such as OpenAI models and the Claude 4.6 models, so refill won't work with them at all.

Future Work

Format effects seem important and this study only scratched the surface. It could be fun to test the retrieval effect causally. It could also be fun to run multi-turn or self-simulation studies that extend this, or to try on a wider variety of models. Looking at raw completion behavior could be interesting too, effectively treating the model as though it were a base model rather than using the standard chat format.

Personal Reflections

I wanted to use this section to reflect a little on the fact that this was my first ever research paper. I went in with a very vague hypothesis, and was lucky enough to find a clear quantitative effect that I could write up. I also massively overscoped at first, attempting also to do an interpretability side and an LLM-as-judge qualitative analysis alongside the quantitative metrics reported above. This ended up being unmanageable and I was forced to cut a lot in order to focus on making sure the results I did find made sense.

Since I don't have any affiliation with a lab or university, I relied a lot on GPT and Claude as collaborators. They helped me with basically every step, but I also made sure to check their work along the way in terms of direction, to make sure we weren't missing something stupid and that we didn't get too far afield.

Overall I learned the importance of going in with a clear question and pre-registered hypotheses, because in the future I might not be so lucky as to have a clear quantitative finding emerge. I also underestimated the amount of work involved in doing a proper research study: a lot of code and time spent crunching numbers in analyses, and working around potential confounders.

The final learning was that I personally prefer doing more "research engineering" work where the research is actively building a project and then evaluating that project, rather than operating exclusively in evaluation-mode. So, in future blog posts, expect that to be the overall bias.